Here’s a koan for your day: How do you express multiple dimensions when you’re stuck in only one? Companies like ROLI, Expressive E, and Sensel, and stellar designers like Roger Linn, Keith McMillen, and the late Don Buchla (to name a precious few), have tried to answer this riddle in the musical realm. With devices that use recent technology to build far more expressive interfaces, they have created a new category of instrument.

The rise of multidimensional music controllers reimagined the path one was supposed to take to create sound. Our goal today is to get you closer to understanding what these instruments are, how they work, and how you can integrate them into your creative environment.

The Case for Multidimensional Instruments

Let’s go back to our previous Beat Connection post. In it, we covered how MIDI, a technical standard created so electronic instruments could communicate digitally with each other, eventually exposed holes in a framework built around keyboard interfaces. You pressed a note, and the path from key strike to sound output was linear. It seemed there was no need to account for anything beyond on and off.

The rise of guitar MIDI controllers, breath controllers, and other nonlinear, non-keyboard instruments from the likes of Buchla and others who deviated from the piano-based model revealed that sending all this information through only one pipeline (in this case, one MIDI channel) was limiting. Expressive instruments like guitars and brass have multiple strings, holes, and other design details that let the player separate out different notes and tonal clusters and give each its own sonority and affectation.

MPE (MIDI Polyphonic Expression) took cues from guitar MIDI controllers and other nonlinear instruments, multiplying the amount of expressive data one can squeeze out of a single electronic instrument by giving each note its own MIDI channel. Now we have synthesizers from Ashun Sound Machines, Modal, and others that can understand, on a per-note basis and at a much more granular level, exactly what your controller wants to express. So, what does it take to express thyself? A multidimensional controller.

Thinking in Multiple Dimensions

For the purpose of this post, as such devices differ in design, we’ll use one controller — ROLI’s Seaboard Rise — to present the possibilities available with multidimensional controllers.

First, let’s take a look at ROLI’s Seaboard design. Notice how the traditional black-and-white piano keybed we all know and love is replaced with a ridge-lined, spongy, tactile surface. This design lets the controller register five different touch-based expressions: pressure, velocity, glide, slide, and lift.

Why five? Imagine a violin. Hold that violin in your memory and pretend you’re playing it. What do you see in your hand? A bow. As you draw the bow across the strings with some pressure, you produce a violin sound and can slide between notes. Now quickly lift and lower the bow onto the strings. What was once a gliding sound is now short and staccato. You’ve manipulated the expression of “lift” to vary the attack and release of the violin’s tone.

Now, do the opposite: don’t lift the bow. Simply glide it across the strings. Apply more or less pressure. Appreciate the change in sustained volume. Then, with your other hand on the fingerboard, wiggle your stopping finger and introduce vibrato to the violin’s tone. In your mind’s eye, you’ve just realized what five dimensions of expression make possible.

One Finger, Many Levels

Multidimensional controllers, when paired with receptive MPE synthesizers and/or software, are able to direct each one of those expressions to a bevy of controls on a per-note basis.
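Under the hood, MPE carries each of these touch dimensions in a standard MIDI message sent on the note’s own channel. A quick-reference sketch in Python; the dictionary is only a lookup table for illustration, and the dimension names follow ROLI’s terms:

```python
# How the five touch dimensions are commonly carried under MPE.
# Every message is sent on the note's own member channel, so the
# value applies to that one note only.
MPE_DIMENSIONS = {
    "strike": "Note On velocity (0-127)",
    "lift": "Note Off (release) velocity (0-127)",
    "pressure": "Channel Pressure (0-127)",
    "slide": "Control Change 74 (0-127)",
    "glide": "Pitch Bend (14-bit, 0-16383, centre 8192)",
}


def describe(dimension: str) -> str:
    """Return the MIDI message that typically carries a touch dimension."""
    return MPE_DIMENSIONS[dimension.lower()]


for name in ("strike", "pressure", "slide", "glide", "lift"):
    print(f"{name:>8}: {describe(name)}")
```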

Think gliding a finger left and right along a surface should control pitch? On hardware instruments like the Hydrasynth or software plug-ins like Madrona Labs Aalto (a perfect Buchla-esque deviation), that move does exactly that. The beauty of multidimensional control comes when you combine multiple expressions at once.


In my previous post, I described how I found a way to mimic the sound of a slide guitar using a ROLI Seaboard and a Hydrasynth. The trick is to glide from note to note with one hand while playing single notes with the other. A slide guitarist has multiple levers and two hands to create that glowing tone that settles on one pitch only to ease into another. On a multidimensional controller, getting there simply means mimicking the expression of another instrument.

With a sustain pedal at the ready, MPE gives each note its own MIDI channel, so every expression on that note travels in its own lane. This frees us to think of each finger as an independent controller. One finger, in theory, can express more than ever before. Five axes of control is three more than any classical keyboard instrument ever had.
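Here is what “its own lane” means at the MIDI level. This is a minimal sketch, assuming a Lower Zone (MIDI channel 1 as the master channel, channels 2 to 16 as member channels) and a simple allocation policy chosen for illustration: hand out free channels in order and, when all 15 are busy, steal the oldest note’s channel. Real controllers use their own allocation schemes.

```python
class MemberChannelAllocator:
    """Give each sounding note its own MPE member channel (simplified sketch).

    Channels are 0-based here: in a Lower Zone, 0 (MIDI channel 1) is the
    master channel and 1-15 (MIDI channels 2-16) are member channels.
    The stealing policy below is an illustrative assumption.
    """

    def __init__(self, first: int = 1, last: int = 15):
        self.free = list(range(first, last + 1))  # channels waiting for a note
        self.active = {}  # note number -> channel, in the order notes started

    def note_on(self, note: int) -> int:
        if note in self.active:  # same pitch struck again: release it first
            self.note_off(note)
        if self.free:
            channel = self.free.pop(0)  # the channel that has been free longest
        else:
            oldest = next(iter(self.active))  # dicts keep insertion order
            channel = self.active.pop(oldest)  # steal the oldest note's channel
        self.active[note] = channel
        return channel

    def note_off(self, note: int):
        channel = self.active.pop(note, None)  # None if the note was stolen
        if channel is not None:
            self.free.append(channel)
        return channel


alloc = MemberChannelAllocator()
print([alloc.note_on(n) + 1 for n in (60, 64, 67)])  # MIDI channels 2, 3, 4
```

Because every sounding note has a channel to itself, a pitch bend or pressure message on that channel touches only that note.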

The Five Axes of Multidimensional Control

To understand the possibilities of multidimensional control, you have to look at each method of control and how it affects a sound source.

Pressure

Pressure is the force exerted by something (in our case, a finger) on something else. Unlike velocity, which is captured once at the moment a note is struck, pressure is continuous: it can rise and fall for as long as the note is held. With multidimensional controllers, pressure values are usually used to adjust things like LFO rates and/or volume-affecting effects.

If you think back to basic sound theory, varying pressure is the bedrock of “tremolo” effects. This same expression is also what keyboards call aftertouch, since pressure is the weight added after a note’s initial velocity.
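Under MPE, pressure typically travels as a Channel Pressure (aftertouch) message on the note’s own member channel, so pressing harder on one note leaves its neighbors alone. A minimal Python sketch, assuming the controller reports pressure as a normalized 0.0 to 1.0 value (that normalization is an assumption for illustration):

```python
def channel_pressure(channel: int, pressure: float) -> bytes:
    """Build a Channel Pressure message for one MPE member channel.

    channel:  0-based MIDI channel (1-15 are member channels in a Lower Zone)
    pressure: normalized finger pressure, 0.0 (resting) to 1.0 (full force);
              this scale is an assumption of the sketch
    """
    if not 0 <= channel <= 15:
        raise ValueError("channel must be 0-15")
    value = round(max(0.0, min(1.0, pressure)) * 127)  # clamp, then scale to 7 bits
    return bytes([0xD0 | channel, value])  # 0xD0 = Channel Pressure status


# Pressing harder on the note held on MIDI channel 2 (index 1), then easing off:
for p in (0.2, 0.6, 1.0, 0.4):
    print(channel_pressure(1, p).hex(" "))
```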

Need more of a certain sonic sweetener? On a multidimensional controller, pressure is typically used to apply modulation after the first “key” touch. In the sound example below, recorded on MOTU’s 828es audio interface, I use ROLI’s Seaboard Rise and Modal Electronics’ Argon8M wavetable synth to give you an idea of what this gesture can do.

Lift

With pressure comes its opposite: lift. Lift is how quickly one removes pressure from a surface. Think back to the earlier thought exercise: if you’re a violinist, how quickly you lift the bow affects the articulations you can create.
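At the MIDI level, lift is usually sent as the release velocity carried by a Note Off message. Here is a sketch of both halves: building that message, and a hypothetical mapping from how long the finger took to leave the surface to a 0 to 127 release velocity. The `lift_to_velocity` function and its time bounds are made up for illustration; every controller measures lift its own way.

```python
def note_off(channel: int, note: int, release_velocity: int) -> bytes:
    """Note Off carrying release velocity ("lift") on one member channel."""
    velocity = max(0, min(127, release_velocity))
    return bytes([0x80 | channel, note & 0x7F, velocity])  # 0x80 = Note Off status


def lift_to_velocity(lift_seconds: float, fastest: float = 0.01,
                     slowest: float = 0.5) -> int:
    """Hypothetical mapping: a quick lift gives a high release velocity.

    lift_seconds is how long the finger took to leave the surface; the
    fastest/slowest bounds are illustrative, not taken from any controller.
    """
    t = min(max(lift_seconds, fastest), slowest)
    return round(127 * (slowest - t) / (slowest - fastest))


# A snappy, staccato lift versus a slow, legato one on MIDI channel 2:
print(note_off(1, 60, lift_to_velocity(0.02)).hex(" "))
print(note_off(1, 60, lift_to_velocity(0.40)).hex(" "))
```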

Multidimensional controllers often direct lift to “brightness” or resonance. For subtle sound sculpting, lift can also control the “urgency” or reverb between notes. Below, you get an idea of what this expression does.

Velocity

The speed with which one strikes a note, and its sonic effects, should need no introduction, but here we are, giving you a different look at this term. Velocity is a classic form of expression, and multidimensional controllers incorporate it as seamlessly as you’d expect.

Although you might not see keys on a multidimensional controller (you might even be striking a surface that varies from the norm), most surfaces treat the velocity of your initial strike as control over the volume of whatever “note” is struck. Depending on the synth or MPE device, velocity might also affect the timbre of the instrument. For example, certain mallet instruments resonate longer or release more quickly depending on the velocity of your strike.
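To make the velocity-to-volume idea concrete, here is a sketch of two mappings a synth patch might apply to the strike. Both the squared curve and the mallet decay times are illustrative assumptions, not values from any particular synth:

```python
def velocity_to_gain(velocity: int, curve: float = 2.0) -> float:
    """Map Note On velocity (0-127) to a linear amplitude between 0 and 1.

    curve > 1 makes soft strikes quieter; the default of 2.0 is an
    illustrative choice, not a standard.
    """
    v = max(0, min(127, velocity)) / 127
    return v ** curve


def mallet_decay(velocity: int, short: float = 0.3, long: float = 2.5) -> float:
    """Hypothetical mallet patch: harder strikes ring longer (in seconds)."""
    return short + (long - short) * max(0, min(127, velocity)) / 127


for v in (20, 64, 127):
    print(v, round(velocity_to_gain(v), 3), round(mallet_decay(v), 2))
```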

The following example gives you a short taste of what strike/velocity is.

Slide

What makes “slide” different from glide, the expression that follows it? Slide is a movement up and down the key, along the y axis, which suits parameters that sweep along a single range.

These values can include how much modulation you want to add to a sound. Because such parameters typically start at 0 and move up or down a scale, a sliding gesture makes sense as an expression.
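Slide is usually transmitted as Control Change 74 on the note’s member channel, which is why some software calls it “timbre.” A sketch, assuming the controller reports finger position along the key as 0.0 (near edge) to 1.0 (far edge); that convention is an assumption for illustration:

```python
def slide_cc74(channel: int, y: float) -> bytes:
    """Control Change 74 ("slide"/"timbre") for one MPE member channel.

    y is the finger's normalized position along the key: 0.0 at the near
    edge, 1.0 at the far edge (an assumed convention for this sketch).
    """
    value = round(max(0.0, min(1.0, y)) * 127)  # clamp, then scale to 7 bits
    return bytes([0xB0 | channel, 74, value])  # 0xB0 = Control Change status


# Sliding a finger from the near edge of a key to the far edge on MIDI channel 2:
print([slide_cc74(1, y)[2] for y in (0.0, 0.25, 0.5, 0.75, 1.0)])
```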

Glide

We round out our explanations of multidimensional expressions with one of its most electronically unique forms: glide. For those who have picked up a string instrument, glide is nothing new. The way one moves a finger from one fret to another is the bedrock of how most expression on such instruments begins.

Glide in multidimensional instruments is no different. In the embryonic days of electronic keyboard instruments, controls like the pitch wheel and ribbon controllers were the only means performers had to create the glissando or vibrato-like affectations of stringed instruments. Of course, due to the nature of these controls, one hand had to dedicate itself to adjusting them, leaving the other free to trigger notes. The design of multidimensional controllers gives them the flexibility to provide unfettered surfaces where one (or more) fingers can move horizontally. The MPE standard gives each note its own pitch-bend path, so a glide on one finger doesn’t drag the other notes along.
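Glide rides on per-note Pitch Bend: a 14-bit value (0 to 16383, with 8192 meaning no bend) sent on the note’s member channel. MPE’s default bend range for member channels is ±48 semitones, though the receiving synth must be set to match. A minimal sketch of encoding a pitch offset, plus a straight-line glide between two offsets (the linear interpolation is an illustrative choice):

```python
def pitch_bend(channel: int, semitones: float, bend_range: float = 48.0) -> bytes:
    """Encode a per-note pitch offset as a 14-bit Pitch Bend message.

    bend_range is the +/- range in semitones; 48 is the MPE default for
    member channels, but the receiving synth must be set to match.
    """
    ratio = max(-1.0, min(1.0, semitones / bend_range))
    value = min(16383, round(8192 + ratio * 8192))  # 8192 = no bend
    return bytes([0xE0 | channel, value & 0x7F, value >> 7])  # LSB, then MSB


def glide(channel: int, start: float, end: float, steps: int) -> list:
    """Pitch Bend messages for a straight-line glide between two offsets."""
    if steps < 2:
        raise ValueError("a glide needs at least two steps")
    return [pitch_bend(channel, start + (end - start) * i / (steps - 1))
            for i in range(steps)]


# Glide the note on MIDI channel 2 up a whole step in five messages:
for message in glide(1, 0.0, 2.0, 5):
    print(message.hex(" "))
```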

In a horizontal, x-axis movement, glide moves between pitches. As any musician knows, it’s between pitches that one finds expressions like vibrato and can reach microtonal pitches that aren’t part of standard tuning. In the example below, I use the ASM Hydrasynth and ROLI’s Seaboard Rise to show the expressive element of glide controls.

Multidimensional Expression In A Two-Dimensional Software Environment

What good is multidimensional control if it can’t live outside your keyboard? In the following section, I’ll show you how to use MPE-compatible software like Ableton Live and Bitwig Studio (to name a precious few of the precious few) to connect multidimensional controllers to your setup.

Ableton Live

Ableton Live 11 introduced MPE compatibility to the clip-based DAW for the first time. Before you can take full advantage of it, you must enable this functionality in the software itself. Here’s how:

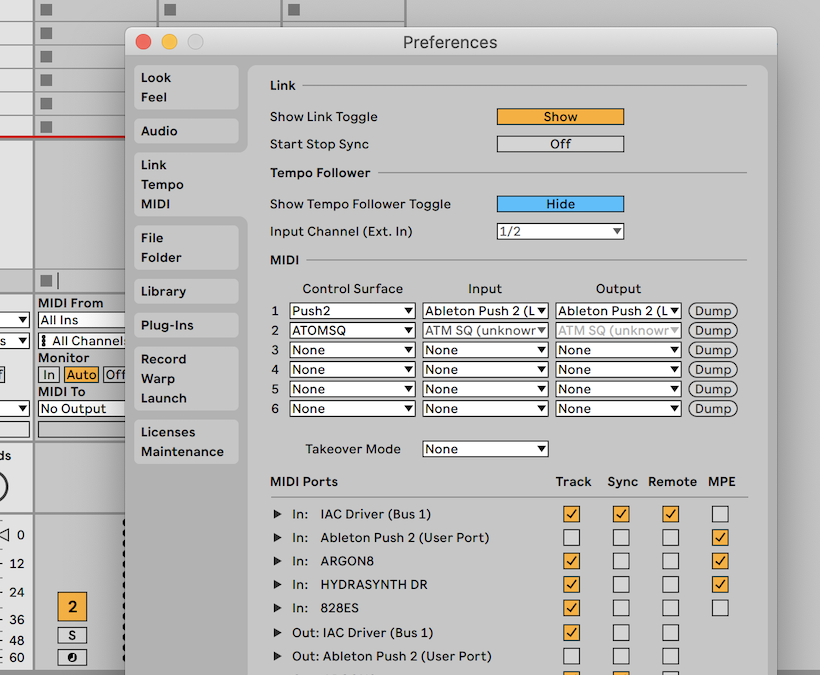

In Ableton’s Preferences pane, click the tab marked “Link – Tempo – MIDI”. Under that tab, if you have any MPE-enabled hardware, you’ll notice a new column under MIDI Ports labeled MPE. By default, all the checkboxes in this column are off.

However, if you tick the box next to each piece of MPE-compatible hardware, you can use multidimensional control once you load and arm such devices.

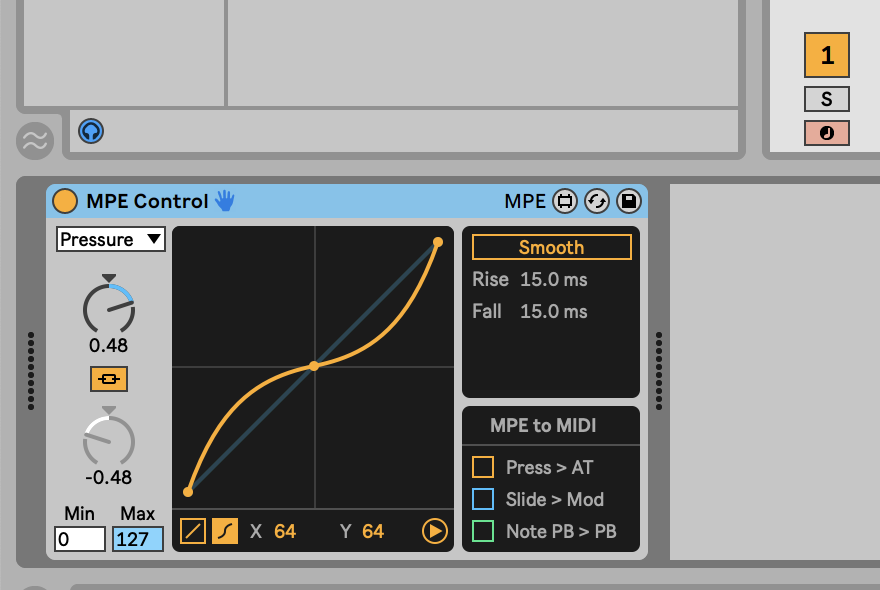

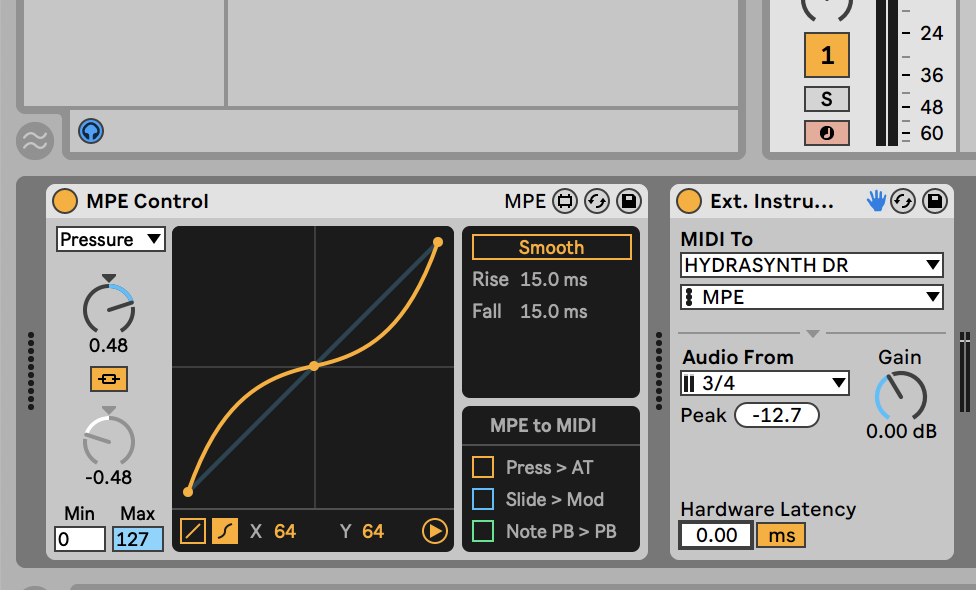

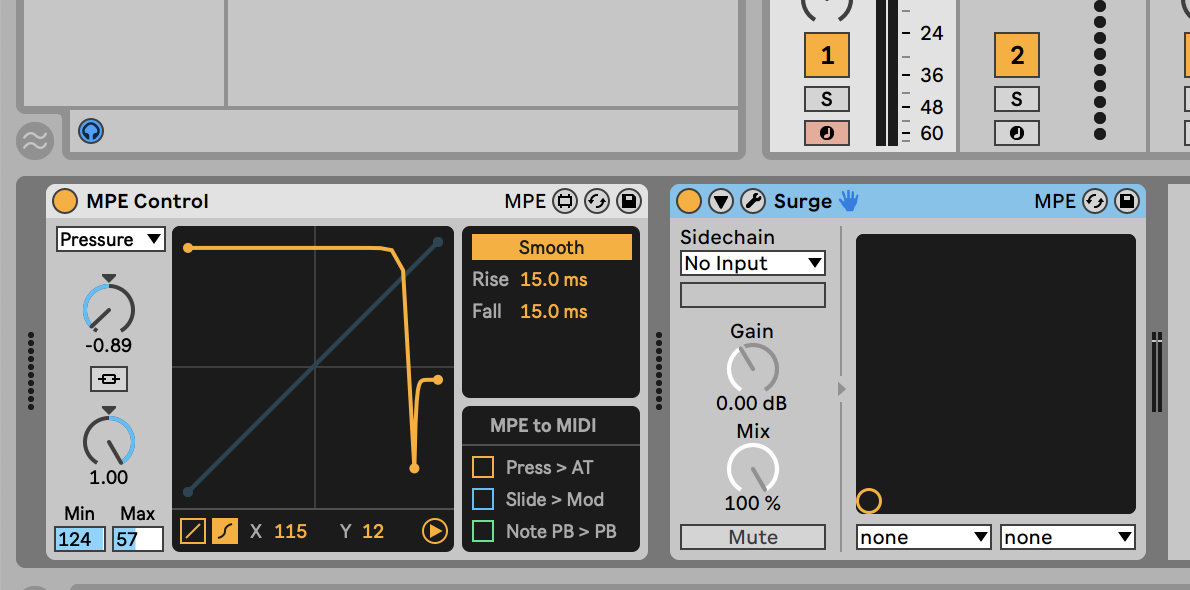

Ableton Live now includes a MIDI effect (MPE Control) that lets you adjust the response curves or ranges of multidimensional expression for Slide, Pressure, and Pitch. This means you could, for example, have a slide response that curves downward as you slide up, among other things you can come up with. MPE Control also lets you instantly send certain expressions to conventional MIDI: “Pressure” to aftertouch, “Slide” to modulation, and per-note pitch bend to full-range pitch bend. It’s welcome granular control over what can be inconsistent responsiveness across multidimensional controllers.
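The exact curve math inside MPE Control isn’t covered here, so treat the following as a generic illustration rather than Live’s implementation: a small Python function that reshapes a normalized expression value, including an inverted response like the downward-curving slide described above. The `amount` scale and the power-curve shape are assumptions of this sketch.

```python
def response_curve(x: float, amount: float = 0.0, invert: bool = False,
                   low: float = 0.0, high: float = 1.0) -> float:
    """Reshape a normalized expression value (0.0-1.0).

    amount > 0 bends the curve toward a slow start, amount < 0 toward a fast
    start; invert makes the output fall as the input rises; low/high rescale
    the output range. Illustrative only: not how MPE Control computes curves.
    """
    x = max(0.0, min(1.0, x))
    if invert:
        x = 1.0 - x
    exponent = 2.0 ** (amount * 3)  # amount in [-1, 1] -> exponent in [1/8, 8]
    return low + (high - low) * x ** exponent


# An inverted slide: sliding up (0.0 -> 1.0) now sweeps the value down.
print([round(response_curve(y, invert=True), 2) for y in (0.0, 0.5, 1.0)])
```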

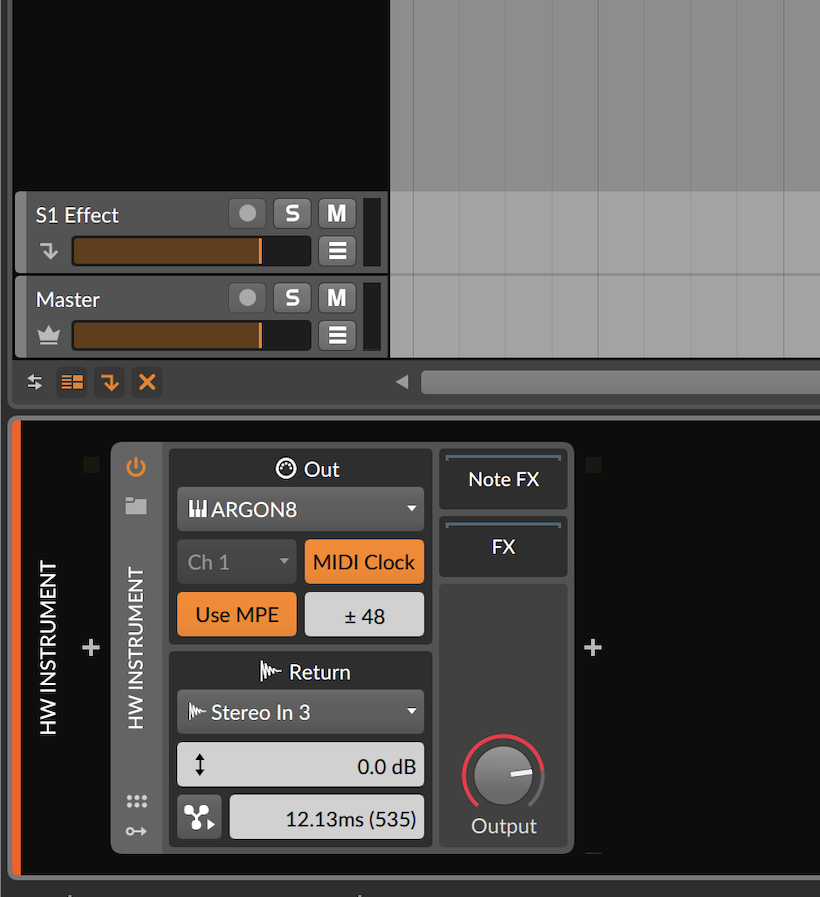

For those of us with MPE-compatible synthesizers, loading an External Instrument into a MIDI track now gives you the option to select “MPE” to send MIDI to an external device. So, rather than struggle through the hell of jerry-rigging an MPE device across multiple MIDI channels, Ableton’s MPE send option seamlessly transmits all the MIDI channels to one device.

As you can see above, any MPE-compatible software is instantly recognized as such and now bears an MPE label in its plug-in title bar. There’s no need to set anything as MPE: if a multidimensional controller is on the MIDI input, Live handles its data as MPE and feeds it to the device.
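Behind the scenes, an MPE sender and receiver have to agree on which channels carry notes. The MPE specification handles this with the MPE Configuration Message (Registered Parameter Number 6), sent on the zone’s master channel. A sketch of its bytes follows; whether Live sends exactly this sequence when you choose “MPE” is an assumption, not something confirmed here.

```python
def mpe_configuration(member_channels: int, upper_zone: bool = False) -> bytes:
    """MPE Configuration Message: RPN 6 sent on the zone's master channel.

    Lower Zone master = MIDI channel 1 (status nibble 0); Upper Zone
    master = MIDI channel 16 (nibble 15). member_channels = 0 turns the
    zone off.
    """
    if not 0 <= member_channels <= 15:
        raise ValueError("a zone can have 0-15 member channels")
    status = 0xB0 | (15 if upper_zone else 0)  # Control Change on the master channel
    return bytes([
        status, 101, 0,              # RPN MSB = 0
        status, 100, 6,              # RPN LSB = 6 -> MPE Configuration
        status, 6, member_channels,  # Data Entry MSB = number of member channels
    ])


# A Lower Zone using every channel: master on 1, members on 2-16.
print(mpe_configuration(15).hex(" "))
```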

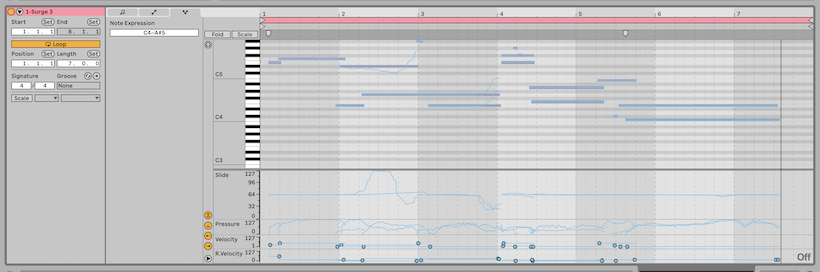

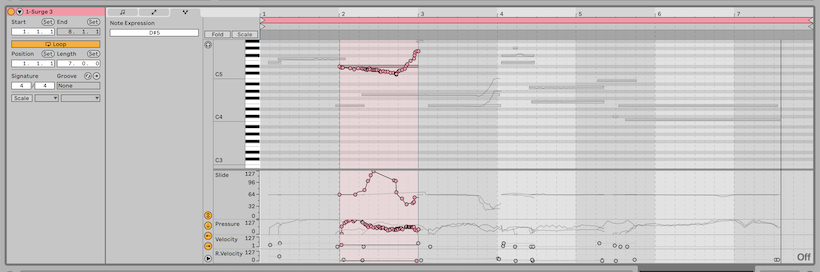

In Ableton Live 11’s new Expression tab, you can view all the MPE expression you’ve recorded into a clip or onto the timeline. Slide, Pressure, Velocity (aka Strike), Release Velocity (aka Lift), and Glide (represented by note expression) are now viewable parameters you can adjust on a per-note level.

Now when you click on any note, you can adjust the envelope of any expression or draw in your own, if you’re so inclined. Add it all up, and this kind of granular adjustability gives you more precise control over multidimensional expression.

Bitwig Studio

Those of you with Bitwig Studio have probably enjoyed a more straightforward path toward MPE enlightenment. Bitwig 3 was built with MPE expression in mind. If you have MPE-compatible hardware, all you need to do to make it appear as an MPE device is drag a HW Instrument device into an empty track.

In any instance of a hardware device, you are given the option to Use MPE and enable multidimensional expression for that device channel’s output. Doing so grays out the option for single MIDI channels and lets you set the pitch bend range for the device, a must to match your hardware synth’s default pitch bend range.
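Why does the bend range have to match? In MIDI, a synth’s bend range is typically set with Registered Parameter Number 0 (Pitch Bend Sensitivity), and if sender and receiver disagree, every glide lands on the wrong pitch. A sketch of that message plus the mismatch arithmetic; the `heard_semitones` helper is hypothetical, for illustration only.

```python
def pitch_bend_range(channel: int, semitones: int, cents: int = 0) -> bytes:
    """RPN 0 (Pitch Bend Sensitivity) on one 0-based MIDI channel.

    48 semitones is the MPE default for member channels.
    """
    if not (0 <= semitones <= 127 and 0 <= cents <= 127):
        raise ValueError("semitones and cents must be 0-127")
    status = 0xB0 | channel  # Control Change on this channel
    return bytes([
        status, 101, 0,        # RPN MSB = 0
        status, 100, 0,        # RPN LSB = 0 -> Pitch Bend Sensitivity
        status, 6, semitones,  # Data Entry MSB = semitones
        status, 38, cents,     # Data Entry LSB = cents
    ])


def heard_semitones(sent: float, controller_range: float, synth_range: float) -> float:
    """Hypothetical helper: the pitch offset a synth plays when ranges disagree."""
    return sent * synth_range / controller_range


# Controller assumes +/-48, synth is still set to +/-2: an octave glide
# comes out as only half a semitone.
print(heard_semitones(12, 48, 2))
```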

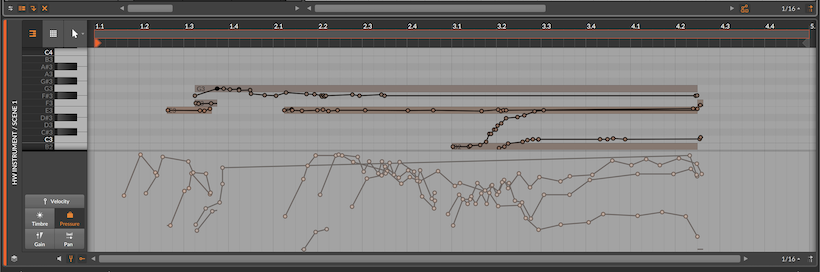

Here’s where Bitwig Studio deviates from Ableton. When you enable the Micropitch Expression view and show Note Expression in the clip editor, you’re now able to see Velocity and Timbre curves that pertain to “Slide” and “Glide”. Pressure curves default to “Pressure” expressions.

Exclusive to Bitwig Studio’s full-fledged modular DAW environment is the ability to treat MPE expressions as modulation sources. Meaning: expressions like “strike,” “lift,” “pressure,” and “timbre” (a synonym for “slide”) are fully assignable modulators you can use to control nearly anything within a software instrument, even one that isn’t MPE-compatible. In essence, you can turn multidimensional controllers into beefier, wilder interfaces and bring some of that multidimensional control to synthesizers designed for one-dimensional control. In the screenshot above, for example, I used this Expression modulator to assign pressure to resonance and oscillator shape.
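Conceptually, an expression modulator is a routing: a source value scaled by an amount and added onto a parameter. Here is a minimal sketch of pressure pushing resonance up and oscillator shape down, as in the example above. The `Route` class, the parameter names, and the additive, clamped behavior are hypothetical and are not Bitwig’s API or internals.

```python
from dataclasses import dataclass


@dataclass
class Route:
    """One modulation routing: an expression pushing a synth parameter."""
    source: str    # expression name, e.g. "pressure"
    target: str    # hypothetical synth parameter name
    amount: float  # -1.0 to 1.0: how far the source moves the target


def apply_routes(base: dict, expressions: dict, routes: list) -> dict:
    """Return a copy of the patch with each routed expression (0.0-1.0) applied.

    Parameters are assumed to be normalized 0.0-1.0 and are clamped to that range.
    """
    out = dict(base)
    for route in routes:
        push = route.amount * expressions.get(route.source, 0.0)
        out[route.target] = max(0.0, min(1.0, out[route.target] + push))
    return out


patch = {"resonance": 0.2, "osc_shape": 0.5}
routes = [Route("pressure", "resonance", 0.6), Route("pressure", "osc_shape", -0.4)]
print(apply_routes(patch, {"pressure": 0.5}, routes))
```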

One of the brilliant features of MPE hardware and software is that it opens up the world of microtonal tuning. Because MPE, by its nature, has to let you glide between pitches, each note gets its own fine-grained (14-bit) pitch bend, allowing smooth, step-free intonation. As a result, you can place a MIDI effect like Bitwig Studio’s Micro-Pitch to “move” note positions in minute increments. In effect, this lays the foundation for these instruments to be microtonal (however you wish!).
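Because every note has its own pitch bend, a microtonal pitch can be reached by picking the nearest standard key and bending it the rest of the way. A sketch for an equal division of the octave (19 steps by default, an arbitrary example), assuming the MPE default ±48 semitone bend range; this is a generic technique, not how Bitwig’s Micro-Pitch works internally.

```python
def edo_to_midi(step: int, divisions: int = 19, base_note: int = 60,
                bend_range: float = 48.0) -> tuple:
    """Map a step of an N-tone equal division of the octave to MIDI.

    Returns (note, bend_value): the nearest 12-tone key plus the 14-bit
    pitch bend (8192 = no bend) that nudges it onto the microtonal pitch.
    """
    semitones = step * 12 / divisions      # distance above base_note
    nearest = round(semitones)             # nearest standard key
    offset = semitones - nearest           # leftover, within +/-0.5 semitone
    bend = round(8192 + offset / bend_range * 8192)
    return base_note + nearest, min(16383, max(0, bend))


# The first few steps of 19-tone equal temperament above middle C:
for step in range(4):
    print(step, edo_to_midi(step))
```

With a ±48 semitone range, one bend step is 96/16384 of a semitone, roughly 0.6 cents, which is why MPE can land on microtonal pitches so precisely.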

Multidimensional instruments take advantage of these features by being more fluid in how they send info that belongs between notes. All the expressions you’re used to by now track true to whatever new microtuning you use.

One final thing: Multidimensional controllers invite us to move away from what we expect a keyboard interface to look like. In Bitwig Studio you get a glimpse of that future via their On-Screen Keyboard.

Through its grid surface (if you have a touch-based OS like Windows 10 and a touch screen), you can play various axes of expression like slide, glide, and lift (including pressure and velocity, if your computer has accelerometer integration). That gives you a real taste of what’s possible if you later decide to invest in a hardware multidimensional controller to play MPE-compatible devices.

For those who don’t own Bitwig Studio but own an iOS device: open the GarageBand app, go to Settings, turn on Support MPE Controllers, and play around with the built-in keyboard on one of its built-in synths. Nearly everyone out there can get a glimpse of the future this way, too.
